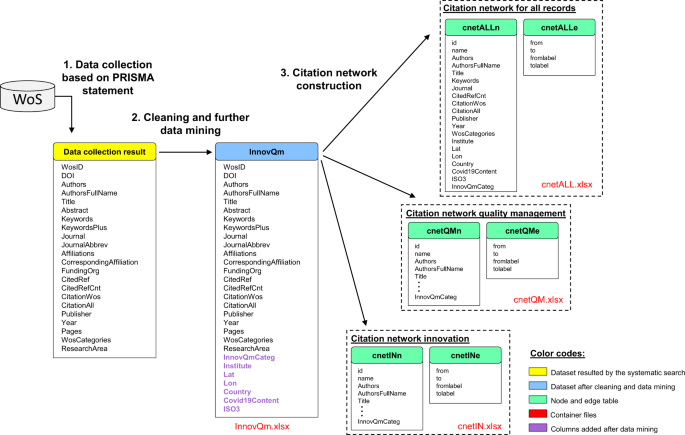

Database construction was performed in three phases: (1) collecting data from the Web of Science (WoS), (2) data cleaning and data mining (preprocessing) and (3) building the citation network node and edge tables. Fig. 1 shows the database construction framework.

Data collection

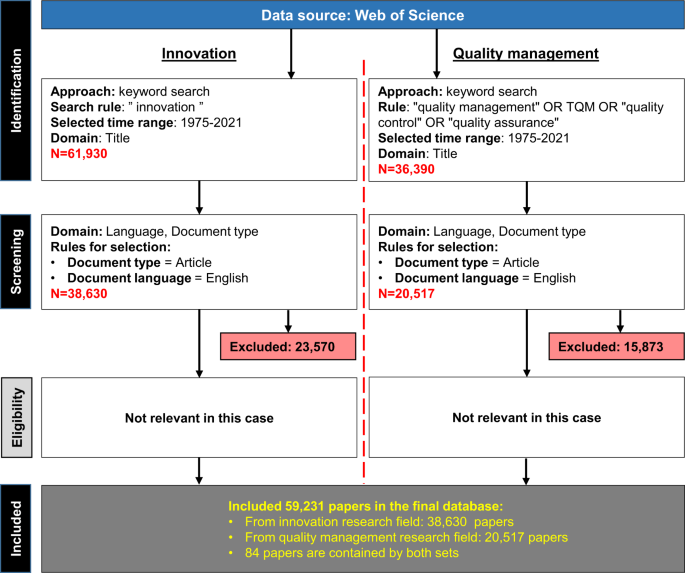

Data collection was carried out following the PRISMA methodology proposed by20. This method guides scholars in conducting systematic literature reviews through four steps: (1) identification, (2) screening, (3) eligibility and (4) inclusion. PRISMA was chosen as the framework for data collection and filtering because it offers three advantages: it provides a comprehensive and transparent process, it is applicable in any research field, and it strongly supports the reproducibility of the review. Moreover, a process flow diagram (which PRISMA also includes) helps readers better understand the overall process and the boundaries of the study, and it can improve the quality of the literature review21. The search was conducted separately for the two areas of interest, and the results were combined to form the full bibliometric dataset. Fig. 2 shows the data collection process.

A keyword search was run for both topics on the WoS platform. The search was restricted to article titles to minimize the inclusion of nonrelevant papers that merely mention terms related to innovation or quality management in their abstracts. The entire available time range was analysed, from the first year available in WoS (1975) up to the date of data collection, 22 September 2021. Only English-language documents were retained, because English is considered the lingua franca of scientific publication22. For the innovation topic (left side of Fig. 2), the keyword search returned 61,930 records in the specified period. This number was reduced to 38,630 after applying the filtering rules above, which excluded 23,570 papers. For quality management (right side of Fig. 2), the keyword search returned 36,390 papers. During the screening phase this number was reduced to 20,517 by excluding 15,873 papers that were non-English documents or document types other than articles. The "Eligibility" step does not apply in this paper, because the number of resulting records makes it impossible to read and evaluate all of the screened papers manually. After the steps of the PRISMA methodology were applied, 59,231 screened papers were collected into the database.
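The language and document-type screening described above can be sketched as a simple filter. This is a minimal illustration, not the authors' code. The record keys `language` and `doc_type` are hypothetical stand-ins for the corresponding WoS export fields. Treating combined types such as "Article; Early Access" as articles is also an assumption.

```python
def is_english_article(record):
    """Return True if a record passes the language and document-type screening."""
    # Hypothetical keys standing in for the WoS language and document-type fields.
    language = record.get("language", "")
    # Assumption: combined types such as "Article; Early Access" count as articles.
    doc_types = {t.strip() for t in record.get("doc_type", "").split(";")}
    return language == "English" and "Article" in doc_types


def screen(records):
    """Return the screened records and the number of excluded records."""
    kept = [r for r in records if is_english_article(r)]
    return kept, len(records) - len(kept)
```

Returning the excluded count alongside the kept records mirrors how the screening step is reported in Fig. 2, where each box lists the number of records removed.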

Data cleaning and data mining

The aim of this step was to extend the dataset with additional valuable variables: the institute of the first author, the country of the first author, the ISO3 country code, COVID-19 content, geographical coordinates and a topic indicator (innovation or quality management). The additional variables were derived as follows:

Institute of the first author (Institute)

The "Affiliations" column provided by WoS was used to extract the first author's affiliation. These data were originally stored as one continuous string containing all authors' names and affiliations. In addition, authors sharing the same affiliation were grouped as a single entity within the string, and the affiliations did not follow the same format and structure in all cases. Because the data were unstructured, text cleaning and text mining were needed to extract the required information. The first author's affiliation was obtained in three steps. First, regular expressions were used to remove unnecessary substrings. Second, abbreviations were replaced by their full forms, for example "University" instead of "Univ." or "Department" instead of "Dept.". Finally, the cleaned string was tokenized to separate the individual parts of the affiliation, such as the institute name, city, street address and country. These steps were implemented in the Python programming language.
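The three cleaning steps above can be sketched with Python's `re` module. This is a minimal sketch that assumes a WoS-style affiliation string in which each affiliation block starts with a bracketed author list, for example "[Smith, J; Doe, A] Univ Oxford, Dept Engn, Oxford, England; [Lee, K] ...". The abbreviation table and the function name `first_affiliation` are hypothetical. The authors' full mapping is not given.

```python
import re

# Hypothetical abbreviation table; the authors' full mapping is not published here.
ABBREVIATIONS = {
    "Univ": "University",
    "Dept": "Department",
    "Inst": "Institute",
}


def first_affiliation(affiliations):
    """Return the tokenized affiliation of the first author as a list of strings."""
    # Split into affiliation blocks at "; [" so that the ";" inside the
    # bracketed author lists does not trigger a split.
    blocks = [b.strip() for b in re.split(r";\s*(?=\[)", affiliations or "") if b.strip()]
    if not blocks:
        return []
    first = blocks[0]
    # Step 1: remove the bracketed author names, e.g. "[Smith, J; Doe, A] ".
    first = re.sub(r"^\[[^\]]*\]\s*", "", first).rstrip(". ")
    # Step 2: expand whole-word abbreviations, with or without a trailing dot.
    for short, full in ABBREVIATIONS.items():
        first = re.sub(rf"\b{short}\b\.?", full, first)
    # Step 3: tokenize on commas into institute, department, city, ..., country.
    return [part.strip() for part in first.split(",") if part.strip()]
```

Because of the word boundaries, already expanded words such as "University" are left untouched. The order of the returned tokens follows the order in the source string.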

Country-related columns (Country, ISO3)

The country of the first author's affiliation was extracted from the preprocessed "Institute" column as part of the cleaned string. Country names were extracted and also mapped to their ISO3 codes. Several statistical programs and packages (such as R) identify countries by ISO codes, so this step lets researchers use the data directly in statistical packages without any further mapping effort.
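The country extraction and ISO3 mapping can be sketched as a lookup on the last token of the tokenized affiliation. This is a minimal sketch under two assumptions. First, the country is the final token. Second, US addresses end with a state and ZIP code (e.g. "MA 02139 USA"). The mapping table is a hypothetical excerpt keyed by WoS-style country spellings, and `country_and_iso3` is a hypothetical name.

```python
# Hypothetical excerpt mapping WoS-style country spellings to ISO3 codes;
# a complete table would cover every spelling present in the dataset.
WOS_COUNTRY_TO_ISO3 = {
    "England": "GBR",
    "Scotland": "GBR",
    "Wales": "GBR",
    "USA": "USA",
    "Peoples R China": "CHN",
    "Germany": "DEU",
    "Hungary": "HUN",
}


def country_and_iso3(institute_tokens):
    """Return (country, ISO3 code) for a tokenized first-author affiliation."""
    if not institute_tokens:
        return None, None
    last = institute_tokens[-1].strip()
    if not last:
        return None, None
    # Assumption: US addresses end with state and ZIP code, e.g. "MA 02139 USA".
    country = "USA" if last.split()[-1] == "USA" else last
    # Unknown spellings yield None so they can be reviewed manually.
    return country, WOS_COUNTRY_TO_ISO3.get(country)
```

Returning `None` for unmapped names, rather than guessing, keeps gaps in the ISO3 column visible for manual correction.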

COVID-19 content (Covid19Content)

A keyword search was used to flag papers written in the context of COVID-19. The value of the column was set to 1 if the title, keywords or abstract contained at least one of the following keywords: "COVID", "coronavirus", "pandemic" or "SARSCoV2". Otherwise, its value was set to 0.
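The flag can be sketched as a substring test over the concatenated title, keywords and abstract. This is a minimal sketch. Case-insensitive matching and the removal of hyphens, so that "SARS-CoV-2" also matches "SARSCoV2", are assumptions not stated in the text. The function name `covid19_content` is hypothetical.

```python
# Keywords from the text, lower-cased for case-insensitive matching (assumption).
COVID_KEYWORDS = ("covid", "coronavirus", "pandemic", "sarscov2")


def covid19_content(title, keywords, abstract):
    """Return 1 if any COVID-19 keyword occurs in title, keywords or abstract, else 0."""
    # Missing fields (None) are treated as empty strings.
    text = " ".join(part or "" for part in (title, keywords, abstract)).lower()
    # Assumption: hyphens are dropped so "SARS-CoV-2" becomes "sarscov2".
    text = text.replace("-", "")
    return int(any(keyword in text for keyword in COVID_KEYWORDS))
```

Substring matching also catches variants such as "COVID-19" and "pandemics". The trade-off is that unrelated uses of "pandemic" (e.g. earlier influenza pandemics) are flagged as well.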

Geographical coordinates (Lat, Lon)

The latitude and longitude of the first author's affiliation were retrieved by geocoding with the GeoPy Python package. The extracted and tokenized "Institute" column was used as the geocoding input.
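Geocoding can fail when the full affiliation string is too specific, so one possible strategy is to retry with progressively shorter queries. This is a sketch of that assumption, not the authors' documented procedure. The function `geocode_institute` is hypothetical. It takes any callable that returns an object with `latitude` and `longitude` attributes, or `None`, so it can be tested offline.

```python
def geocode_institute(tokens, geocode):
    """Return (lat, lon) for a tokenized affiliation, or (None, None) if not found.

    `geocode` is any callable mapping a query string to an object with
    `latitude` and `longitude` attributes, or to None when nothing matches.
    """
    # Assumption: try the full affiliation first, then drop leading tokens
    # (institute, department, ...) until only the most general part remains.
    for start in range(len(tokens)):
        query = ", ".join(tokens[start:])
        location = geocode(query)
        if location is not None:
            return location.latitude, location.longitude
    return None, None
```

With GeoPy, `geocode` could be `RateLimiter(Nominatim(user_agent="...").geocode, min_delay_seconds=1)` from `geopy.geocoders` and `geopy.extra.rate_limiter`. The rate limiter keeps requests within the Nominatim usage policy. A match on a shorter query is less precise, for example city level instead of campus level.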

Search category indicator (InnovQMCateg)

This column was added manually when the results of both searches were combined, as shown in Fig. 2. A paper collected exclusively by the innovation-related search was assigned the value "innovation". The value "quality" indicates that the paper appears exclusively in the quality management search results. Papers found by both searches were assigned the value "both", and the resulting duplicate records were removed from the data table.
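The merge rule above can be sketched with set operations. This is a minimal sketch that assumes each paper has a unique identifier in both result sets (e.g. a WoS accession number or DOI). The function name `categorize` is hypothetical. Taking the set union is what removes the duplicate records of papers found by both searches.

```python
def categorize(innovation_ids, quality_ids):
    """Map each unique paper identifier to "innovation", "quality" or "both"."""
    innovation, quality = set(innovation_ids), set(quality_ids)
    categories = {}
    # The union contains each paper once, so duplicates across searches disappear.
    for paper_id in innovation | quality:
        if paper_id in innovation and paper_id in quality:
            categories[paper_id] = "both"
        elif paper_id in innovation:
            categories[paper_id] = "innovation"
        else:
            categories[paper_id] = "quality"
    return categories
```

The number of entries in the result equals the size of the union, which is the total number of distinct papers in the combined table.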

Citation network construction

The node and edge tables were generated from the collected and further processed WoS dataset. In the core dataset, the cited articles were stored in a single column as a string. To build an edge list from this string-type variable, regular expressions (RegEx) were used to find all DOI numbers in the long text. The extracted cited DOI numbers were then stored as a list. The edge list construction process can be described as follows:

1. Select paper i.
2. Extract all DOI numbers from the string of cited references using regular expressions.
3. For each extracted DOI, add the pair DOI_i – DOI_j to the edge list, where j is the jth element of the extracted cited DOIs for paper i.
4. Repeat steps 1–3 for all papers (DOIs) in the core dataset.

The construction was implemented in Python using the re, NLTK, NumPy and pandas packages.
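The four steps above can be sketched with Python's `re` module. This is an illustration, not the authors' script. The DOI pattern is adapted from the commonly used Crossref-style expression. The ";" character is excluded because WoS separates cited references with "; ", which is an assumption about the input format. DOIs are lower-cased because they are case-insensitive. The function names are hypothetical.

```python
import re

# Crossref-style DOI pattern; ";" is excluded from the suffix because cited
# references are assumed to be separated by "; " in the WoS string.
DOI_PATTERN = re.compile(r"10\.\d{4,9}/[-._()/:A-Za-z0-9]+")


def extract_dois(cited_refs):
    """Step 2: return the unique, lower-cased DOIs found in a cited-references string."""
    if not cited_refs:
        return []
    found = (m.rstrip(".").lower() for m in DOI_PATTERN.findall(cited_refs))
    # dict.fromkeys removes duplicates while keeping first-seen order.
    return list(dict.fromkeys(found))


def build_edge_list(papers):
    """Steps 1-4: `papers` is an iterable of (citing DOI, cited-references string)."""
    edges = []
    for doi_i, cited_refs in papers:           # step 1: select paper i
        if not doi_i:
            continue                           # papers without a DOI cannot be nodes
        source = doi_i.lower()
        for doi_j in extract_dois(cited_refs):  # step 2: extract cited DOIs
            edges.append((source, doi_j))       # step 3: add the DOI_i - DOI_j pair
    return edges                               # step 4: the loop covers every paper


def build_node_table(edges):
    """Return the sorted set of DOIs appearing as source or target of an edge."""
    return sorted({doi for edge in edges for doi in edge})
```

The resulting list of tuples could then be loaded into a table, for example with `pandas.DataFrame(edges, columns=["Source", "Target"])`. The column names here are illustrative only.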
